8 Domain Knowledge

Machine learning algorithms learn models from data without needing much input. At least compared to other modeling approaches. But what about all the domain knowledge that you already have? Having models learn from data seems like the opposite of using domain knowledge – you let an algorithm identify the relevant patterns and differentiate between signal and noise.

Domain knowledge refers to facts that have been established in a field.

Can you use domain knowledge to guide the model? This chapter shows that you can, from the standard steps of translating the problem into a prediction task to more creative means such as designing custom evaluation metrics. The chapter focuses on ways to infuse domain knowledge directly into the model. Less well-known is that you can extract domain knowledge from the model: Domain knowledge constrains the model and affects its performance, which gives us information on how valuable the domain knowledge is for this prediction task. This makes machine learning a two-way street between domain knowledge and predictive performance.

Krarah and Rattle brainstormed about the problems with the tornado prediction system. Rattle had tunnel vision and looked only for reasons in her theory of generalization. Krarah shouted in frustration: “You’re not even using the knowledge about tornadoes, which we’ve accumulated over centuries. No wonder it doesn’t work!” This call from reality was sorely needed for Rattle. But how could she integrate domain knowledge into machine learning?

8.1 Translate the question into a prediction task

The Ravens were wrong. Machine learning models are typically infused with lots of domain knowledge already, even when it may seem otherwise at first. Most domain knowledge comes from “translating” the scientific question into the prediction task. Predicting tornadoes from radar data and not from the number of people reporting knee aches might seem common sense. But as soon as you get into the details of designing the prediction task, you typically rely on deeper domain knowledge. Designing the prediction task might not always feel like infusing domain knowledge, especially when the prediction task is long established, like in weather forecasting. But for scientific problems that aren’t typically set up as prediction tasks, the translation process allows for a lot of creativity, which should be guided by domain knowledge.

A big part of the translation is creating features and targets. Whether you predict intelligence from the income of the parents or chocolate consumption [1] makes for very different models. But if you are a typical reader of this book, you already know these things. Time to get to the juicy and often overlooked means to infuse domain knowledge.

8.2 Constrain the model

Machine learning is praised for the flexibility to learn any function, but sometimes you might want the opposite and constrain the model. Model constraints are a direct way to incorporate domain knowledge into the learning process. Some examples of constraints that can be put on the relation between features and the prediction:

- Monotonicity: The model’s predictions have to increase or decrease monotonically with increasing values of the feature in question. Example: Ensuring credit risk scores decrease monotonically with income.

- Linearity: Restrict the model to learn a linear relation between prediction and feature(s). Example: Predicting rent, where the price is linearly dependent on the size of the house.

- Sparsity: Restrict the number of features to be used for the prediction. Example: In genomic studies, identifying a small subset of genes responsible for a particular disease.

- Smoothness: Restrict the predictions to smoothly change when the input changes. Example: Predicting temperature as a function of altitude, where abrupt changes are not expected.

- Cyclicity: A smoothness constraint that ensures that the predictions for the low and high end of a feature “meet”. Example: Predicting electricity demand based on the time of the day, considering the cyclical nature of a day, including a smooth transition from 23:59 to 00:00.

- Range constraints: Restricting the domain of the predictions. For example, pH levels of a solution can only be between 0 and 14.

How do you infuse these constraints into your models? How do you force a support vector machine to model one of the features linearly, but not all the other features? How do you make sure a random forest becomes sparse? Sometimes you can work with model-agnostic methods, such as feature pre-processing:

- To obtain sparse models, use a feature selection step as pre-processing, followed by your usual machine learning pipeline.

- To model a feature cyclically, transform the feature into two features with sin and cos transformations [2].

However, for other constraints, it is necessary to limit the model classes to ones that can handle the constraint. For instance, if you want your models to have monotonicity constraints for some features, you should only use model classes such as:

- Linear regression models.

- XGBoost, a library for a gradient boosting algorithm, which allows setting a monotonicity constraint.

- Neural networks with monotonicity constraints [3].

The more constraints you add, the smaller the pool of eligible model classes becomes. And the more specific the constraints, the more you depart from well-trodden paths, and you’ll find yourself using untested code from obscure machine learning publications instead of scikit-learn and other well-established machine learning libraries.

If you find yourself wanting many model constraints that can’t be enforced in a model-agnostic way (e.g. feature engineering), you might want to look at frameworks and model families that are constraint-friendly, but also well-established:

- Model-based boosting [4]: A gradient-boosting-based approach allowing you to pick constraints for each of the features.

- Deep neural networks: You can design many constraints through choices of the neural network architecture, loss function, and how the network is trained.

Regardless of whether you add constraints through feature engineering or restrict the model classes, ensure to also fit an unconstrained model. As stated in the beginning, domain knowledge doesn’t only flow in the direction of the model, but model performance may tell you something about the predictiveness of that domain knowledge. By comparing the performances of constrained and unconstrained models, you can evaluate the constraint. A decrease in predictive performance when adding constraints might suggest that the domain knowledge behind it isn’t as robust as initially thought (unless you can argue otherwise).

8.3 Design your performance metric

There’s a temptation to measure model performance with an off-the-shelf metric such as the mean squared error or the F1 metric. An off-the-shelf metric can be fine, but you might be better off designing a custom metric. The performance metric is an excellent, but often overlooked, way to incorporate domain knowledge into the model.

Poor modeling choices, lack of hyperparameter tuning, or bad features result in low predictive performance. That means the performance evaluation acts as a warning system. But if you’ve put an unsuitable warning system in place, there is no “meta-warning system” that will alert you. Except for reality, because at some point you realize that the model doesn’t fulfill its ulterior purpose. The lack of feedback makes it important to pick or design the right evaluation metric. Every metric makes judgments about how bad certain prediction errors are. For example, the mean squared error \(\frac{1}{n}\sum_{i=1}^{n}(y^{(i)} - \hat{f}(x^{(i)}))^2\) comes with the following judgments:

- Symmetry: Missing the outcome by -1 is as problematic as being off by +1.

- Equality: Each data point’s prediction is equally important.

- Non-linearity: Being off by 2 is four times as bad as being off by 1.

Designing these “judgments” is an opportunity for leveraging domain knowledge. You might weigh certain errors higher, weigh a subset of your data higher, or anticipate distribution shifts.

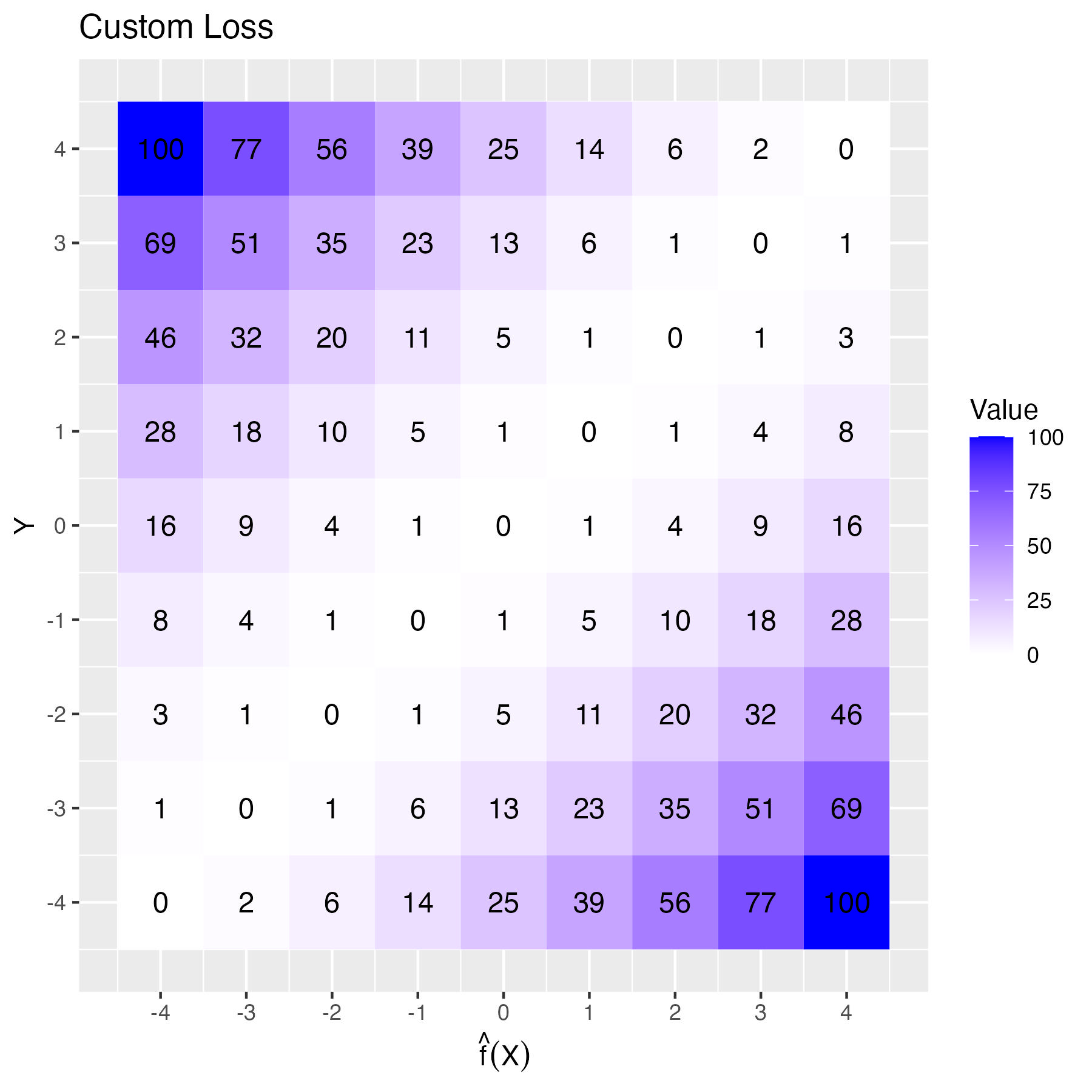

Let’s illustrate this with an example. Goschenhofer et al. [5] developed a wearable-based Parkinson’s severity monitor that predicted disease severity (\(y\)) from severe slowing of movements (-4) to okay (0) to severe excessive movements (+4). Together with medical doctors, the authors identified three requirements for the performance metric: non-linearity, asymmetry, and lack of translation invariance. The metric should penalize larger errors more heavily. Particularly, errors with a sign change should be more costly. If the actual outcome is -1, a prediction of +1 is worse than -3 because getting the direction wrong is more harmful to the patient than an overestimation. Furthermore, diagnostic errors for patients with severe symptoms should have greater weight than errors for patients with mild symptoms. This is what the new performance metric looks like:

\[\mathbb{P}(Y,X,\hat{f}) = \frac{1}{n}\sum_{i=1}^n\left[\frac{y^{(i)}}{4}\alpha + sign\left(y^{(i)} - \hat{f}(x^{(i)})\right)\right] \left(\hat{f}(x^{(i)}) - y^{(i)}\right)^2\]

At its core, it is the squared loss between model prediction and ground truth label but multiplied with a factor controlling over- and underestimation, steered by the parameter \(\alpha \in [-1,1]\), and a sign error penalty. This controls the symmetry (negative \(\alpha\) penalizes underestimation, positive \(\alpha\) penalizes overestimation, and 0 means symmetric loss). Based on feedback from medical experts, the authors chose \(\alpha=0.25\). By multiplying \(\alpha\) with the true label, the true label controls the direction of the asymmetric penalization. For example, classifying a true y=-1 as -3 “costs” 4, but if you classify it as +1, which is also 2 units apart, it costs +5. That’s the custom “sign error” penalty of the metric. Figure 8.1 visualizes the loss function.

8.4 Align loss and evaluation metric

Ideally, the metric you choose can be used as a loss function, the function that is directly optimized during the training. However, this might not always be possible:

- The performance metric might not be differentiable, but, for example, training neural networks requires a loss function with a gradient.

- Some model classes have a “built-in” loss function, like the linear regression model which optimizes the squared loss by default, or decision trees that optimize the Gini metric.

- The performance metric might be difficult to optimize because it is non-convex or infeasible to compute.

- The performance metric might only work on an aggregation of your data (e.g. F1 score), but the model requires an instance-wise computable loss function.

Here are some practical tips for working with loss functions and performance metrics:

- If possible, design the performance metric so that it is convex and has a gradient. This way, you can use it directly as a loss function, at least for some model classes such as neural networks.

- If you can’t develop the perfect loss function, at least align the loss function with the performance metric: Do they at least have the same optimum? Do they model the same (dis)similarities? Do they both have the same scaling? Think linear versus squared.

- Ensure that hyperparameter tuning and model selections are based on the performance metric and not the loss function.

- If you have different options for loss functions, you can treat the loss as a hyperparameter.

- Even if the loss function optimized by some model class seems like a mismatch, train the model anyway and let the model selection process decide. In the case of a huge mismatch between loss and performance metrics, it should show up in the performance evaluation.

8.5 Make it physical

The loss function is also a means to model physics. Many physical processes are guided by partial differential equations (PDEs). These equations are relevant in predicting the weather, simulating the climate, understanding how aircraft fly, and understanding fluid dynamics. However, simulating PDE-based systems can be computationally costly and slow. Wouldn’t it be great to use machine learning instead?

At first glance, these equations seem the opposite of what machine learning entails: They represent explicit physical laws, whereas neural networks are often seen as a mess of matrix multiplications. With physics-informed neural networks (PINNs) [6], however, you can make a neural network learn physical laws/PDEs as well, making the neural network an emulator of a physical process. This leverages the property of neural networks being universal function approximators [7]. The trick lies in using a loss function that minimizes a two-part MSE:

\[MSE = MSE_u + MSE_{\hat{f}},\]

where \(MSE_u\) corresponds to the initial and boundary conditions of the system, and \(MSE_{\hat{f}}\) is the squared error between model output and the output of the original differential equations, based on collocation points, which are points in the domain where the PDE is enforced. Instead of purely emulating the process, physics-informed neural networks can also be used to discover the parameterization of PDEs from noisy measurements of the system [8].

Alternatively, instead of encoding PDEs via the loss function during training, you can also encode physics constraints directly into neural networks, resulting in what some refer to as Physics-encoded Neural Networks (PeNNs).

8.6 Considering various evaluation metrics

Supervised machine learning has a drawback: the evaluation is typically based on just one dimension, the performance metric. But life is never that simple! You might have multiple metrics that you care about. Fortunately, it is possible to train models that optimize different metrics, but it is not as simple as just having one metric.

Imagine you want to predict tornadoes in the next hour. We could have accuracy or F1 score as one metric, but there’s more to life than just being right all the time. You might also want to consider the complexity of the model, like how sparse it is, or even the average time it takes to make a prediction (because nobody likes waiting, especially if there’s a tornado looming).

There are two options to accomplish this:

- Combine both metrics into one. This only works when you can quantify your desired trade-off between the two metrics.

- Use multi-objective optimization for hyperparameter tuning and model selection.

Here are a couple of metrics that you can optimize for, partially based on [9]:

- Predictive Performance: Can be quantified using measures such as F1-Score, AUC, or mean absolute error.

- Interpretability [10]: Metrics such as sparsity, interaction strength, and complexity of the main effects.

- Fairness: Measured as disparate impact, equalized odds, or calibration.

- Robustness: Measured via performance under perturbations, presence of adversarial examples, and under distribution shifts.

- Alignment with physics-based models [11]

- Inference time

- Memory requirements

Let’s say you want to predict a disease outcome using gene expression data. From domain knowledge, you know that only a few genes might be relevant. But if you only optimize for predictive performance, the model might pick up more genes than necessary if it doesn’t hurt the performance bottom line. By also optimizing for sparsity in this example, you can find a model that satisfies both performance and sparsity1. We’ve already discussed constraints in a previous section, but multi-objective optimization offers a slightly different approach to constraints. Optimizing for multiple objectives produces multiple “optimal” models. Each model is optimal regarding a different trade-off between the objectives, also known as “Pareto-efficient” models. Having multiple models can be both a bug and a feature. It is a bug because you typically want just one model. It is a feature because the set of Pareto efficient models allows you to choose the trade-off between the metrics that make sense based on domain knowledge. And that’s easier than having to decide beforehand how to balance both performance metrics.

Again, we can observe the two-way street: Whether you collapse the objective into one function or embrace the multiplicity of models with different trade-offs – multi-objective optimization will enable you to explore different trade-offs and therefore evaluate domain knowledge.

8.7 Use the right inductive biases and learn from them

The prior assumptions a machine learning algorithm imposes on the model to help generalize to unseen data.

Imagine a machine learning algorithm that produces “models” that only memorize training data. To “predict”, this model would have to check whether the new data point is in the training data. If so, the model can return the outcome for the training data point. If not, it can’t make a prediction. But what if a training data point is almost identical to the new one? Could you just use the same prediction? The model could then just return the prediction of the nearest data point. Or, to make it robust, the average prediction of multiple close data points from the training data. That’s the idea behind K-nearest neighbors.

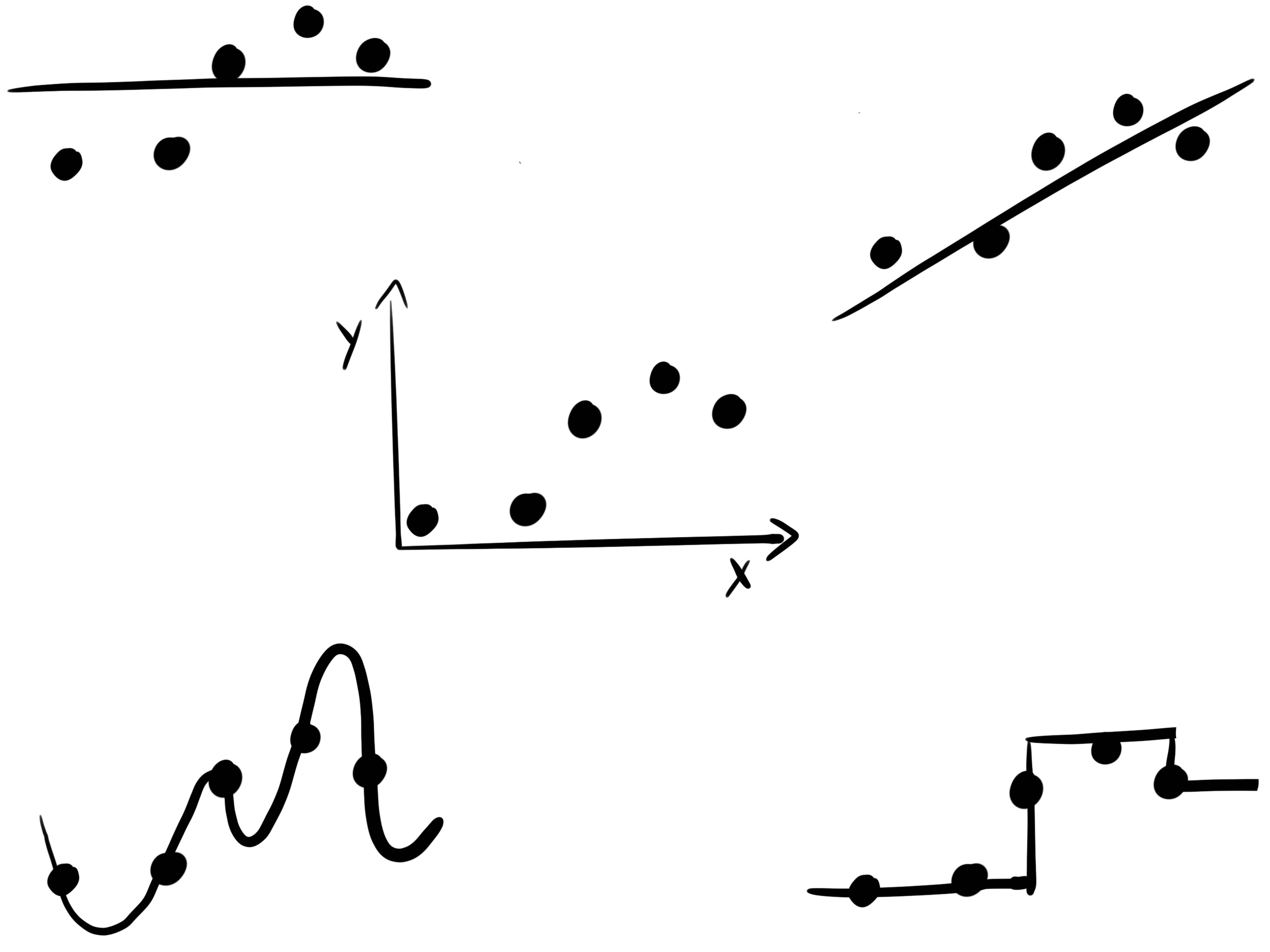

But you could also have a model that makes different assumptions about how to generalize to new data, see Figure 8.2. If you get a new data point and one of the features is slightly different from one of the training data points, you could interpolate linearly between the neighboring data points for this new data point. If you do that for all features and training data points, then you end up with a linear regression model.

Or you could use another strategy: Divide your data into partitions which are defined by binary decisions in the features. The partitions are chosen so that the training data points within have a similar outcome. This comes with a beautiful generalization strategy: For each new data point, you just have to check in which data partition it falls, based on the feature values, and then take the average outcome of all the training data points as a prediction. This is the strategy that decision trees use.

All these examples show that each model class, like trees, k-nearest-neighbors, and linear models, comes with different inductive biases. The inductive bias is an instruction on how to generalize the relation between inputs and outputs to unseen data.

Try out models with different inductive biases. If a certain inductive bias seems to stand out, study how it relates to the studied phenomenon. Can you learn about your problem at hand?

A way to understand inductive biases better is by using tools from interpretable machine learning (see Chapter 9). Interpretable machine learning can also be used to extract insights from the models. Domain knowledge often comes in the form of causal knowledge (see Chapter 10).

Multi-objective optimization is not the only method to introduce sparsity into your model. Generally, regularization is employed, implying that only models with inherent sparsity, such as LASSO (a sparse linear model), are considered.↩︎